White House Aims to Cut AI Risks to National Security with New Regulations

A new executive order tasks agencies with creating standards for secure AI and developing ‘game-changing cyber protections.’

The White House’s recent artificial intelligence executive order seeks to manage risks that the nascent technology poses to national security, IT systems and economic security.

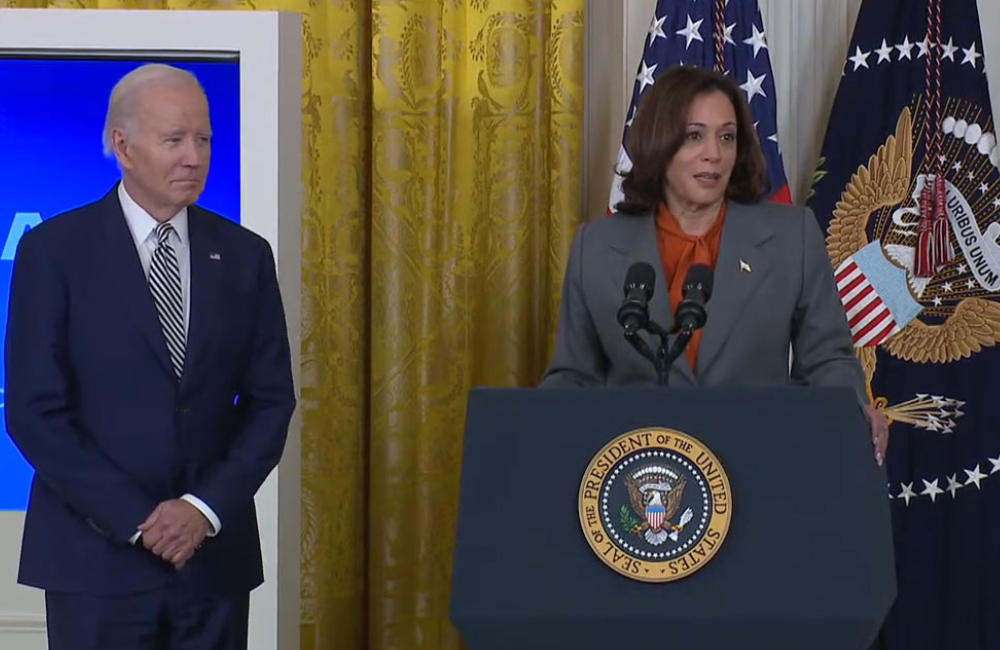

The executive order is being touted by the Biden-Harris administration as “the most sweeping actions ever taken to protect Americans from the potential risks of AI systems.” It directs federal agencies to develop standards for secure AI and address the risks that the emerging technology may pose to chemical, biological, radiological, nuclear and cybersecurity systems.

“This order builds on the critical steps we’ve already taken,” President Joe Biden said on Monday. “With today’s executive order I’ll soon be signing, I’m determined to do everything in my power to promote and demand responsible innovation.”

Through the Defense Production Act, companies developing large-scale AI systems potentially posing national security risks are now required to share their safety test results with the federal government.

The executive order also directs the Defense Department and the Department of Homeland Security to complete an operational pilot project that will deploy AI capabilities, including large language models, to help find and remediate vulnerabilities in the federal government’s software, systems and networks.

“In the wrong hands, AI can make it easier for hackers to exploit vulnerabilities in the software that makes our society run. That’s why I’m directing the Department of Defense and Department of Homeland Security, both of them, to develop game-changing cyber protections that will make our computers and our critical infrastructure more secure than it is today,” Biden said.

Requirements for the Defense Department

The executive order directs the secretary of defense to assess ways AI can increase biosecurity risks and make recommendations on how to mitigate those risks. Risks from generative AI models trained on biological data are of particular concern.

Another section of the executive order says the secretary of defense, alongside the director of national intelligence and secretaries of energy, commerce and homeland security, will develop initial guidelines for performing security reviews. These guidelines will include reviews that identify risks posed by the release of federal data that could help develop offensive cyber capabilities or chemical, biological, radiological and nuclear weapons.

The order also directs the Defense Department to address gaps in AI talent. It requires the DOD to make recommendations on how to hire noncitizens with certain skill sets, including at the Science and Technology Reinvention Laboratories. The secretary of defense will also make recommendations to streamline processes for accessing certain classified information for noncitizens.

The executive order outlines a critical role for the Department of Homeland Security in mitigating AI’s risks to critical infrastructure. It calls for the agency to develop secure standards, tools and tests for AI deployment.

“[The President’s Executive Order] directs DHS to manage AI in critical infrastructure and cyberspace, promote the adoption of AI safety standards globally, reduce the risk of AI’s use to create weapons of mass destruction, combat AI-related intellectual property theft, and ensure our immigration system attracts talent to develop responsible AI in the United States,” Secretary of Homeland Security Alejandro Mayorkas said in a statement.

The plan tasks Mayorkas, who established the department’s AI Task Force in April 2023 and appointed the department’s first chief AI officer, with chairing the AI Safety and Security Advisory Board. DHS already uses AI in agency operations such as combating online child sex abuse, interdicting fentanyl and assessing damage after disasters.

In addition, the White House Chief of Staff and the National Security Council are directed to develop a national security memorandum to ensure the military and the intelligence community use AI safely, effectively and ethically in their missions.

The secretary of defense, along with the secretary of veterans affairs and the secretary of health and human services, is also required to establish an HHS AI task force and develop a plan for responsible use of AI in the health and human services sector, including drug and device safety, research, healthcare delivery and financing.

“If the White House and Executive branch achieve even half of the provisions, it will be a vital step forward to safeguard AI,” Heather Frase, senior fellow of AI assessment at Georgetown University’s Center for Security and Emerging Technology, told GovCIO Media and Research in an emailed statement. “The next step beyond developing domestic standards and protections is ensuring that our allies and other countries around the world also adopt similar norms to enable interoperability and the benefits of AI – I’m hopeful that the UK AI Summit and other multilateral efforts like the G7 will carry forward the EO’s momentum.”

Executive Action Is Not the Final Word

While the executive order has significant power, legislation will be required to regulate the technology.

“This executive order represents bold action, but we still need Congress to act,” Biden said.

Sen. Mark Warner, D-Va., chairman of the Senate Select Committee on Intelligence and co-chair of the Senate Cybersecurity Caucus, said some of the provisions “just scratch the surface.”

“I am impressed by the breadth of this Executive Order – with sections devoted to increasing AI workforce inside and outside of government, federal procurement, and global engagement. I am also happy to see a number of sections that closely align with my efforts around AI safety and security and federal government’s use of AI,” Warner said.

“At the same time, many of these just scratch the surface – particularly in areas like health care and competition policy. Other areas overlap pending bipartisan legislation, such as the provision related to national security use of AI, which duplicates some of the work in the past two Intel Authorization Acts related to AI governance. While this is a good step forward, we need additional legislative measures,” he added.

Rep. Don Beyer, D-Va., vice chair of the bipartisan Congressional AI Caucus, said that legislation is necessary to develop standards for safe and secure AI.

“President Biden’s Executive Order on AI is an ambitiously comprehensive strategy for responsible innovation that builds on previous efforts, including voluntary commitments from leading companies, to ensure the safe, secure, and trustworthy development of AI,” Beyer said in a statement. “We know, however, that there are limits to what the Executive Branch can do on its own and in the long term, it is necessary for Congress to step up and legislate strong standards for equity, bias, risk management, and consumer protection.”
